Deploy Unravel on Snowflake with continuous monitoring of data

Snowflake provides a secure sharing option within the same region with strong security. Unravel leverages the Snowflake data sharing with secure data sharing option for data transfer over shared connections outside the Virtual Private Cloud (VPC) or through private links. This involves employing a serverless/warehouse task to independently replicate metadata from the schema in the customer Snowflake account, allowing you to share metadata directly with the Unravel Snowflake account in the same region. The data is polled and shared every 1 hour by default. The polling can be configured.

For secure data sharing from Snowflake, complete the prerequisites and configure the Secure data share option.

The solution provides the following benefits:

No requirement for a direct connection to Snowflake

Rapid setup and reduced complexity for security permissions

Availability of all Unravel features with configurable latency

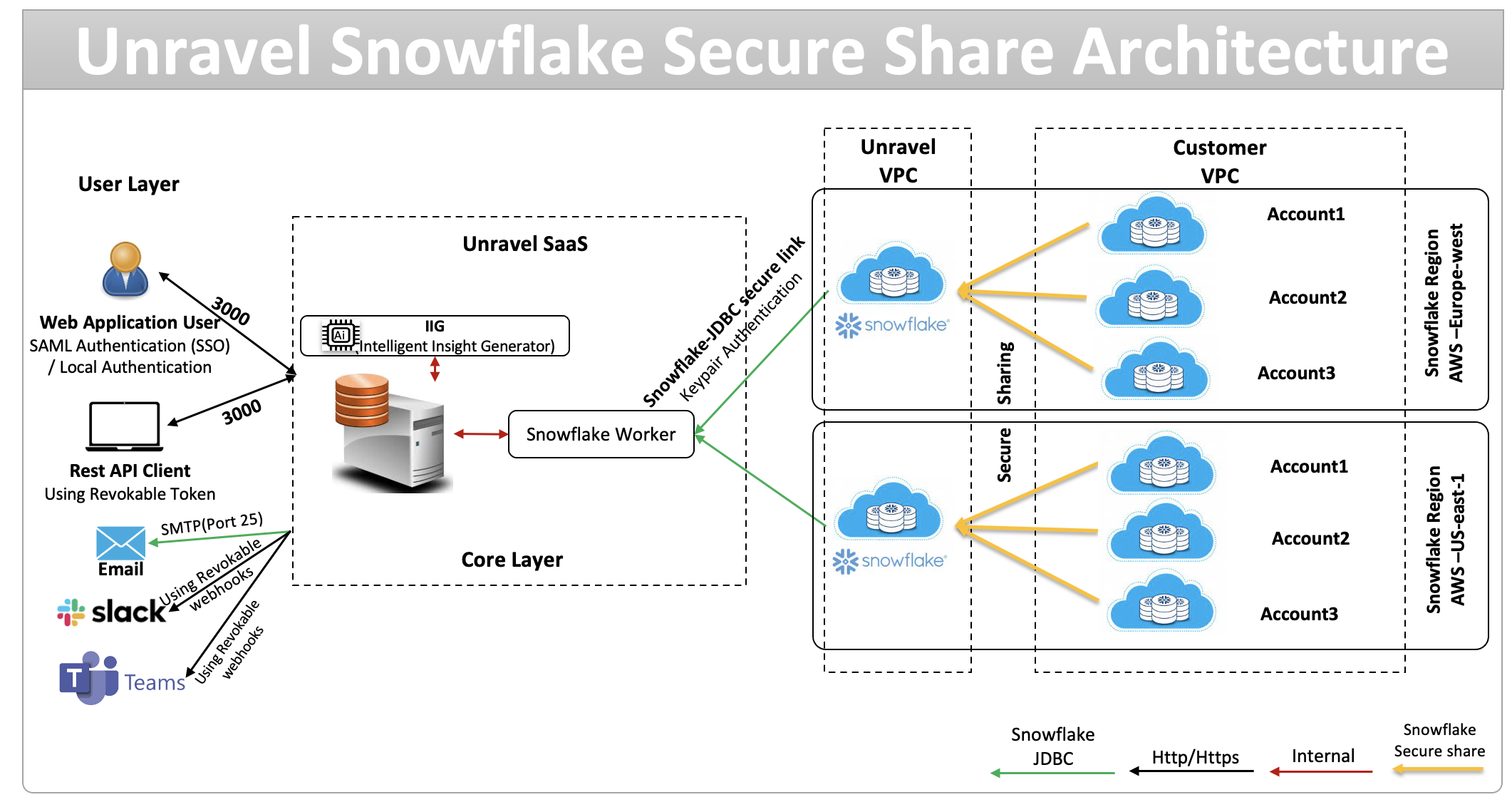

Architecture

Unravel Snowflake Architecture - Secure Share

The Unravel Snowflake Architecture - Secure share is a comprehensive framework designed to integrate with the Snowflake data warehouse, providing users with robust data analytics and management capabilities. The architecture is divided into three main layers: User Layer, Core Layer, and Data Source Layer, each serving distinct purposes and functionalities.

The architecture emphasizes secure communication pathways, including external connections, HTTP/HTTPS traffic, and internal communications, ensuring data integrity and confidentiality. It provides a scalable and efficient platform for data analytics, capable of integrating with various external systems and notifying users through multiple channels

User Layer: This layer interfaces directly with users and clients, offering web application access, REST API interactions, and external notifications through platforms such as Email, Slack, and Microsoft Teams. It ensures secure and authenticated access using username/password for web users and revocable tokens for API clients.

Core Layer: Deployed on virtual machines or cloud services like AWS, Azure, and SaaS, this layer includes critical components such as NGINX for load balancing, a notification service, various analytical and service modules, and a datastore. The Core Layer facilitates seamless data management and insight generation through components like the Intelligent Insight Generator (IIG) and Snowflake Worker. It supports development environments like Python and Java and runs on CentOS.

Data Source Layer: This layer securely connects to the Snowflake data warehouse using a Snowflake-JDBC secure link with local authentication. It handles both shared and customer-specific data, ensuring that data operations are performed within the same region (us-east-1) to optimize performance and reduce latency.

Secure Data Sharing Process

The secure data sharing process in this architecture follows these steps:

Authentication: Users authenticate using SAML-based SSO or local credentials. API clients use revocable tokens, while messaging and email systems connect by secure webhooks or SMTP.

User Request Initiation: Users or applications request data or analytics via the Unravel SaaS web interface or the REST API.

Request Handling: The Snowflake Worker, assisted by the Intelligent Insight Generator, processes these requests and determines the data requirements.

Establish Secure Connection: The Snowflake Worker creates a secure, encrypted JDBC connection to the Snowflake environment, using keypair authentication.

Initiate Secure Share: The Snowflake environment in the Unravel VPC securely shares required data with customer accounts in the Customer VPC. This is accomplished through Snowflake’s Secure Share feature, providing read-only, reference-based data access.

Multi-Account Access: Multiple customer accounts, distributed across regions, are able to securely access the same shared data while maintaining their own governance and access controls.

Result Delivery and Notification: Processed insights and results are delivered to users through their chosen channels, such as web, API, email, Slack, or Teams.

The following prerequisites must be fulfilled before you use the secured data-sharing Snow-Share Live option:

User running this script should be ACCOUNTADMIN role or access to SNOWFLAKE.ACCOUNT_USAGE.

User should have permissions to create databases, schemas, tables, stored procedures, and tasks in the target Snowflake account to the user.

Review the required task schedule and latency, and make sure the user’s role has enough warehouse credits and resource limits because more frequent task runs increase compute cost.

Get the Unravel Snowflake account ID for the corresponding platform, region, and edition from Unravel before you run the SHARE_TO_ACCOUNT procedure.

Database | Schema | Table Name |

|---|---|---|

Snowflake | Account_usage | WAREHOUSE_METERING_HISTORY |

Snowflake | Account_usage | WAREHOUSE_EVENTS_HISTORY |

Snowflake | Account_usage | WAREHOUSE_LOAD_HISTORY |

Snowflake | Account_usage | COLUMNS |

Snowflake | Account_usage | TAGS |

Snowflake | Account_usage | TAG_REFERENCES |

Snowflake | Account_usage | TABLES |

Snowflake | Account_usage | TABLE_STORAGE_METRICS |

Snowflake | Account_usage | METERING_DAILY_HISTORY |

Snowflake | Account_usage | METERING_HISTORY |

Snowflake | Account_usage | DATABASE_REPLICATION_USAGE_HISTORY |

Snowflake | Account_usage | REPLICATION_GROUP_USAGE_HISTORY |

Snowflake | Account_usage | SNOWPIPE_STREAMING_FILE_MIGRATION_HISTORY |

Snowflake | Account_usage | QUERY_HISTORY |

Snowflake | Account_usage | ACCESS_HISTORY |

Snowflake | Account_usage | DATABASE_STORAGE_USAGE_HISTORY |

Snowflake | Account_usage | STAGE_STORAGE_USAGE_HISTORY |

Snowflake | Account_usage | SEARCH_OPTIMIZATION_HISTORY |

Snowflake | Account_usage | DATA_TRANSFER_HISTORY |

Snowflake | Account_usage | AUTOMATIC_CLUSTERING_HISTORY |

Snowflake | Account_usage | QUERY_INSIGHTS |

Snowflake | Account_usage | SESSIONS |

INFORMATION_SCHEMA | AUTO_REFRESH_REGISTRATION_HISTORY | |

INFORMATION_SCHEMA | IS_QUERY_HISTORY | |

INFORMATION_SCHEMA | SHARED_TABLES | |

INFORMATION_SCHEMA | SHARED_VIEWS | |

INFORMATION_SCHEMA | SHARED_COLUMNS | |

Unravel | Unravel schema | REPLICATION_LOG |

Function call get_query_operator_stats() | QUERY_PROFILE | |

Show warehouses command | WAREHOUSES | |

Show warehouse parameter command | WAREHOUSE_PARAMETERS | |

Contact Unravel Support for Unravel account ID corresponding to the platform, region, and edition.

Secure Data Sharing Configuration

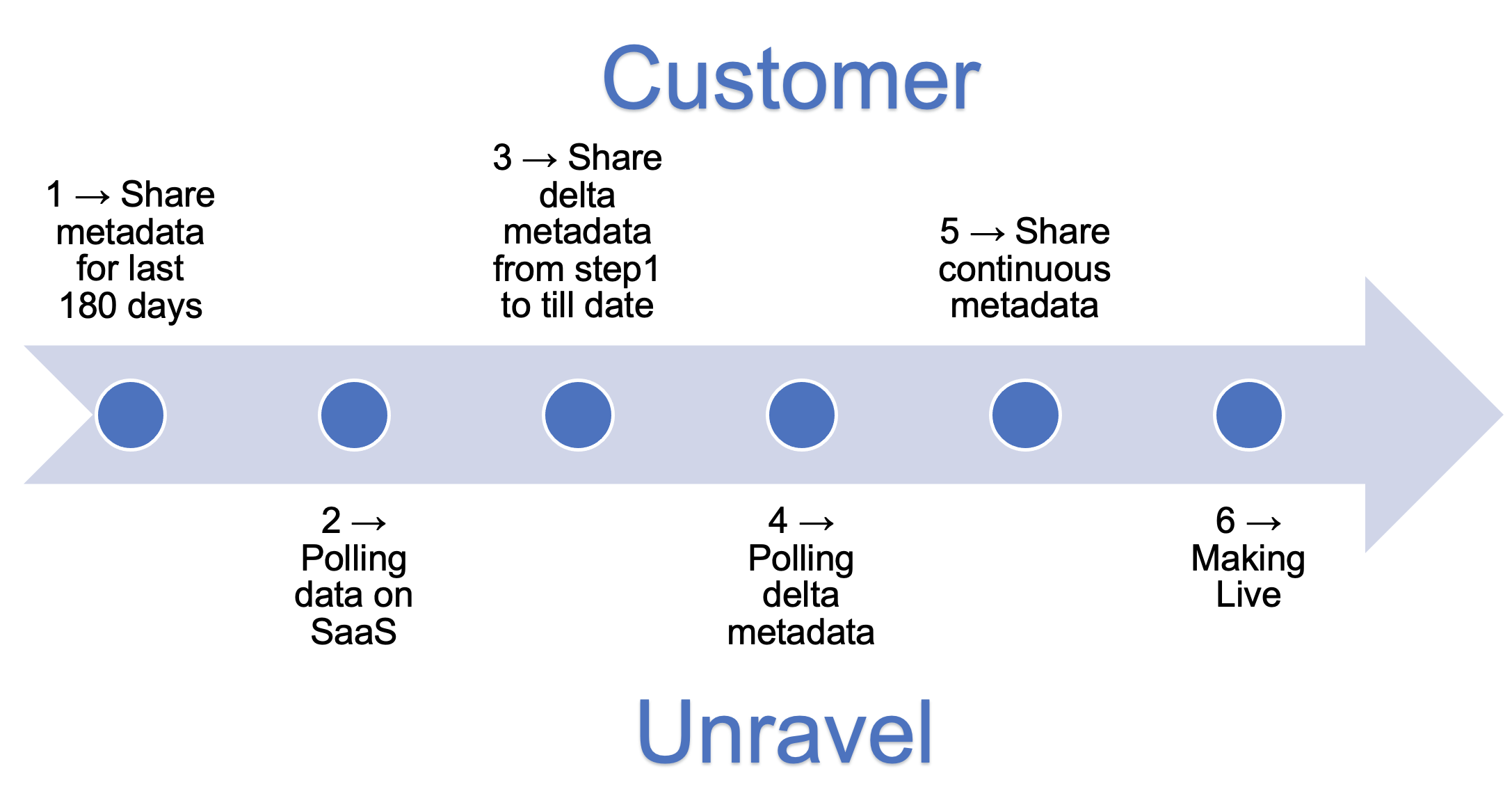

Snow-Share Live: Continuous Data Share

Use the Snow-Share Live option to set up continuous, secure data sharing between your Snowflake environment and Unravel.

To configure the snow-share live option:

Run these six steps in order to enable continuous secure data sharing from Snowflake to Unravel.

Share metadata for last 180 days

Run step 1a from this script to create the Snowflake database, schema, stored procedures, and supporting objects required for secure data sharing with Unravel.

Run the following procedures one time to perform the 180‑day data sharing load and initialize metadata for Unravel.

CALL CREATE_TABLES('UNRAVEL_SHARE','SCHEMA_4827_T'); CALL REPLICATE_ACCOUNT_USAGE('UNRAVEL_SHARE','SCHEMA_4827_T',180); CALL REPLICATE_HISTORY_QUERY('UNRAVEL_SHARE','SCHEMA_4827_T',180); CALL WAREHOUSE_PROC('UNRAVEL_SHARE','SCHEMA_4827_T'); CALL CREATE_QUERY_PROFILE('UNRAVEL_SHARE', 'SCHEMA_4827_T', '1', '14'); CALL create_shared_db_metadata('UNRAVEL_SHARE','SCHEMA_4827_T');Run the following procedure to share the replicated tables with the target Unravel Snowflake account ID.

CALL SHARE_TO_ACCOUNT('<Unravel Account Identifier>');

After the 180‑day data‑sharing load is complete, communicate the details to Unravel so Unravel can configure and start polling data.

Share delta metadata from Step 1 to current date.

Calculate the delta days using the dates when you ran Step 1 and when you plan to run Step 3:

delta days = (Step 3 date − Step 1 date) + 1

For example, if Step 1 ran on 02‑Jan and Step 3 runs on 04‑Jan, delta days = (4 − 2) + 1 = 3. Use that delta days value in the following procedure calls (replace 3 in this example with your calculated value).

CALL REPLICATE_ACCOUNT_USAGE('UNRAVEL_SHARE','SCHEMA_4827_T', 3); CALL REPLICATE_HISTORY_QUERY('UNRAVEL_SHARE','SCHEMA_4827_T', 3); CALL WAREHOUSE_PROC('UNRAVEL_SHARE','SCHEMA_4827_T'); CALL CREATE_QUERY_PROFILE('UNRAVEL_SHARE', 'SCHEMA_4827_T', '1', '3'); CALL create_shared_db_metadata('UNRAVEL_SHARE','SCHEMA_4827_T');After the delta data sharing in Step 3, communicate to Unravel. Unravel will initiate delta data loading.

Run the following statements to create and resume Snowflake tasks for continuous sharing, using schedules that meet your requirements.

CREATE OR REPLACE TASK replicate_metadata WAREHOUSE = UNRAVELDATA SCHEDULE = 'USING CRON 0 3,9,15,21 * * * UTC' AS CALL REPLICATE_ACCOUNT_USAGE('UNRAVEL_SHARE','SCHEMA_4827_T',2); CREATE OR REPLACE TASK replicate_history_query WAREHOUSE = UNRAVELDATA SCHEDULE = '60 MINUTE' AS CALL REPLICATE_HISTORY_QUERY('UNRAVEL_SHARE','SCHEMA_4827_T',2); CREATE OR REPLACE TASK createProfileTable WAREHOUSE = UNRAVELDATA SCHEDULE = '60 MINUTE' AS CALL create_query_profile('UNRAVEL_SHARE', 'SCHEMA_4827_T', '1', '2'); CREATE OR REPLACE TASK replicate_warehouse_and_realtime_query WAREHOUSE = UNRAVELDATA SCHEDULE = '720 MINUTE' AS BEGIN CALL warehouse_proc('UNRAVEL_SHARE','SCHEMA_4827_T'); END; CREATE OR REPLACE TASK shared_db_metadata_task WAREHOUSE = UNRAVELDATA SCHEDULE = '720 MINUTE' AS BEGIN CALL create_shared_db_metadata('UNRAVEL_SHARE','SCHEMA_4827_T'); END; /** (Resume all TASKS) **/ ALTER TASK replicate_metadata RESUME; ALTER TASK replicate_history_query RESUME; ALTER TASK createProfileTable RESUME; ALTER TASK replicate_warehouse_and_realtime_query RESUME; ALTER TASK shared_db_metadata_task RESUME;When continuous data sharing is stable and validated, communicate to Unravel. Unravel will make the SaaS account live.