Databricks FAQ

Sign in to Unravel.

Click Manage > Workspace and check whether the corresponding Databricks workspace is shown in the Workspace list.

Check if Spark conf, logging, and init scripts are present.

For Spark conf, check that every property is correct.

Refer to Unravel > Workspace Manager > Cluster Configurations.

Check if the content in Spark conf, logging, and init scripts is correct.

Refer to Unravel > Workspace Manager > Cluster Configurations.


Check if spark.unravel.server.hostport is a valid address.

Check if the host/IP is accessible from the Databricks notebook.

%sh nslookup 10.2.1.4

Check if port 4043 is open for outgoing traffic on Databricks.

%sh nc -zv 10.2.1.4 4043
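If nslookup or nc is not available on the cluster image, the two checks above can be approximated from a Python notebook cell. The following is a minimal sketch that uses only the standard library. The helper names parse_hostport and can_connect are illustrative and are not part of Unravel or Databricks, and 10.2.1.4:4043 is the sample address used above.

```python
import socket


def parse_hostport(value):
    """Split a 'host:port' string (as in spark.unravel.server.hostport) into (host, port)."""
    host, sep, port_text = value.strip().rpartition(":")
    if not sep or not host or not port_text.isdigit():
        raise ValueError(f"expected host:port, got {value!r}")
    port = int(port_text)
    if not 1 <= port <= 65535:
        raise ValueError(f"port out of range: {port}")
    return host, port


def can_connect(host, port, timeout=5.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


# Example use in a notebook cell (sample address from the steps above):
# host, port = parse_hostport("10.2.1.4:4043")
# print("reachable" if can_connect(host, port) else "unreachable")
```

A False result only shows that the connection could not be opened from the notebook. It does not say whether the block is on the Databricks side (outgoing) or the Unravel side (incoming).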

Check if port 4043 is open for incoming traffic on Unravel.

<see administrator>

Check if port 443 is open for outgoing traffic on Unravel.

curl -X GET -H "Authorization: Bearer <token-here>" 'https://<instance-name-here>/api/2.0/dbfs/list?path=dbfs:/'

Access the unravel.properties file in Unravel and get the workspace token. Run the following to check if the token is valid and works:

curl -X GET -H "Authorization: Bearer <token>" 'https://<instance-name>/api/2.0/dbfs/list?path=dbfs:/'
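The same token check can be scripted in Python when curl is not convenient. This is a sketch under stated assumptions: it calls the /api/2.0/dbfs/list endpoint from the curl command above, the helper names are illustrative, and treating HTTP 401 or 403 as "token invalid" is an assumption about how the workspace responds, not something the steps above confirm.

```python
import urllib.error
import urllib.parse
import urllib.request


def build_dbfs_list_request(instance_name, token, path="dbfs:/"):
    """Build the same GET request as the curl command: list a DBFS path with a bearer token."""
    query = urllib.parse.urlencode({"path": path})
    url = f"https://{instance_name}/api/2.0/dbfs/list?{query}"
    return urllib.request.Request(
        url, headers={"Authorization": f"Bearer {token}"}, method="GET"
    )


def token_is_valid(instance_name, token, timeout=10.0):
    """Return True on HTTP 200 and False on 401/403 (assumed to mean a bad token).

    Any other HTTP or network error is re-raised so it is not mistaken for a bad token.
    """
    request = build_dbfs_list_request(instance_name, token)
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except urllib.error.HTTPError as err:
        if err.code in (401, 403):
            return False
        raise
```

Keeping the request builder separate from the network call makes it possible to inspect the URL and header without contacting the workspace.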

Run the following to get the workspace token from the unravel_db.properties file in DBFS:

dbfs cat dbfs:/databricks/unravel/unravel-db-sensor-archive/etc/unravel_db.properties

Following is a sample of the output:

#Thu Sep 30 20:06:42 UTC 2021
unravel-server=01.0.0.1\:4043
databricks-instance=https\://abc-0000000000000.18.azuredatabricks.net
databricks-workspace-name=DBW-xxx-yyy
databricks-workspace-id=<workspaceid>
ssl_enabled=False
insecure_ssl=True
debug=False
sleep-sec=30
databricks-token=<databricks-token>

Check if the token is valid using the following command:

curl -X GET -H "Authorization: Bearer <token>" 'https://<instance-name>/api/2.0/dbfs/list?path=dbfs:/'

If the token is invalid, you can regenerate the token and update the workspace from Unravel UI > Manage > Workspace.
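The sample output uses properties-file syntax, where colons in values are escaped with a backslash. To pull the instance name and token out of that output programmatically, a small parser is enough. The sketch below is illustrative only: parse_properties is a hypothetical helper, it handles just the simple key=value lines and escapes shown in the sample, and it is not a complete properties parser.

```python
import re
from urllib.parse import urlparse


def parse_properties(text):
    """Parse simple key=value properties text into a dict.

    Skips blank lines and comment lines, and removes the backslash from
    escaped characters such as the escaped colons in the sample.
    """
    props = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line[0] in "#!":
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = re.sub(r"\\(.)", r"\1", value.strip())
    return props


# Abbreviated copy of the sample output above.
SAMPLE = r"""#Thu Sep 30 20:06:42 UTC 2021
unravel-server=01.0.0.1\:4043
databricks-instance=https\://abc-0000000000000.18.azuredatabricks.net
databricks-token=<databricks-token>
"""

props = parse_properties(SAMPLE)
# Host part only, suitable for the <instance-name> placeholder in the curl command.
instance_name = urlparse(props["databricks-instance"]).netloc
token = props["databricks-token"]
```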

You can register a workspace in Unravel from the command line with the manager command.

Stop Unravel.

<Unravel installation directory>/unravel/manager stop

Switch to Unravel user.

Add the workspace details using the manager command as follows from the Unravel installation directory:

source <path-to-python3-virtual environment-dir>/bin/activate
<Unravel_installation_directory>/unravel/manager config databricks add --id <workspace-id> --name <workspace-name> --instance <workspace-instance> --access-token <workspace-token> --tier <tier_option>

## For example:
/opt/unravel/manager config databricks add --id 0000000000000000 --name myworkspacename --instance https://adb-0000000000000000.16.azuredatabricks.net --access-token xxxx --tier premium

Apply the changes.

<Unravel installation directory>/unravel/manager config apply

Start Unravel.

<Unravel installation directory>/unravel/manager start
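When several workspaces need to be registered, the stop, add, apply, and start sequence above can be wrapped in a small script. The following is a sketch only: the function names are hypothetical, it uses only the manager subcommands and flags shown in the steps above, and it assumes it is run as the Unravel user with the Python virtual environment already activated.

```python
import os
import subprocess


def registration_commands(install_dir, workspace_id, name, instance, token, tier):
    """Return the manager invocations from the steps above, in order, as argv lists."""
    manager = os.path.join(install_dir, "unravel", "manager")
    return [
        [manager, "stop"],
        [manager, "config", "databricks", "add",
         "--id", workspace_id, "--name", name, "--instance", instance,
         "--access-token", token, "--tier", tier],
        [manager, "config", "apply"],
        [manager, "start"],
    ]


def redact(argv):
    """Copy an argv list with the value after --access-token masked for display."""
    shown = list(argv)
    if "--access-token" in shown:
        shown[shown.index("--access-token") + 1] = "****"
    return shown


def register_workspace(commands, dry_run=True):
    """Print each command; run it only when dry_run is False, stopping on the first failure."""
    for argv in commands:
        print(" ".join(redact(argv)))
        if not dry_run:
            subprocess.run(argv, check=True)
```

The default dry run prints the commands without executing them, which allows a review before Unravel is actually stopped.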

On Databricks console, go to Workspace > Settings > Admin Console > Global init scripts tab.

Click + Add.

Copy the contents of the install-unravel.sh file into the Add Script text box. You can print the file with the following command:

dbfs cat dbfs:/databricks/unravel/unravel-db-sensor-archive/dbin/install-unravel.sh

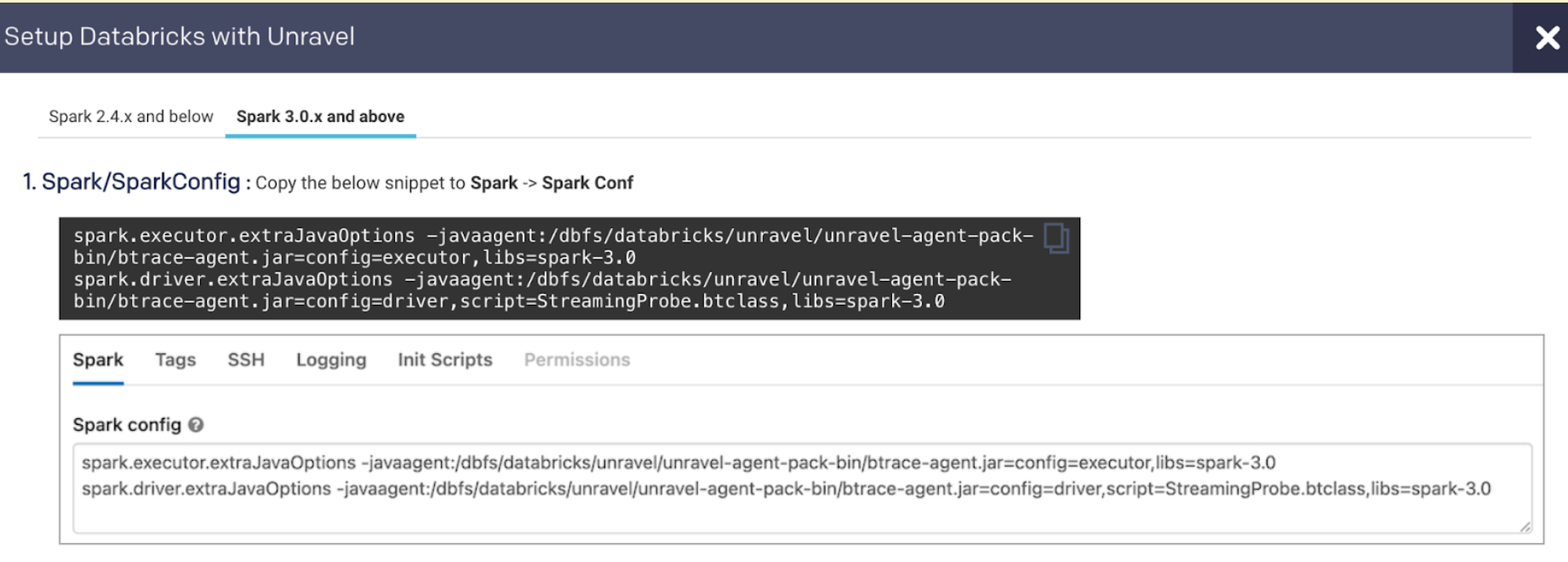

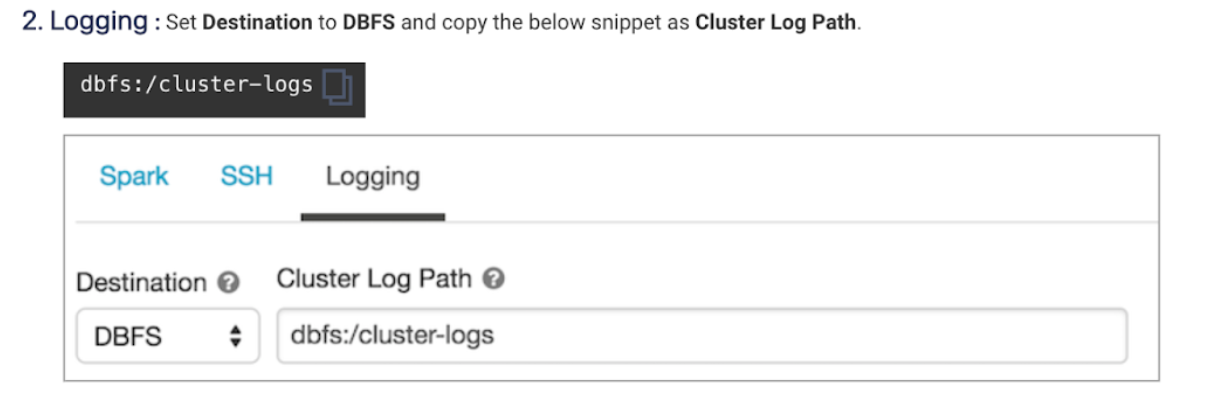

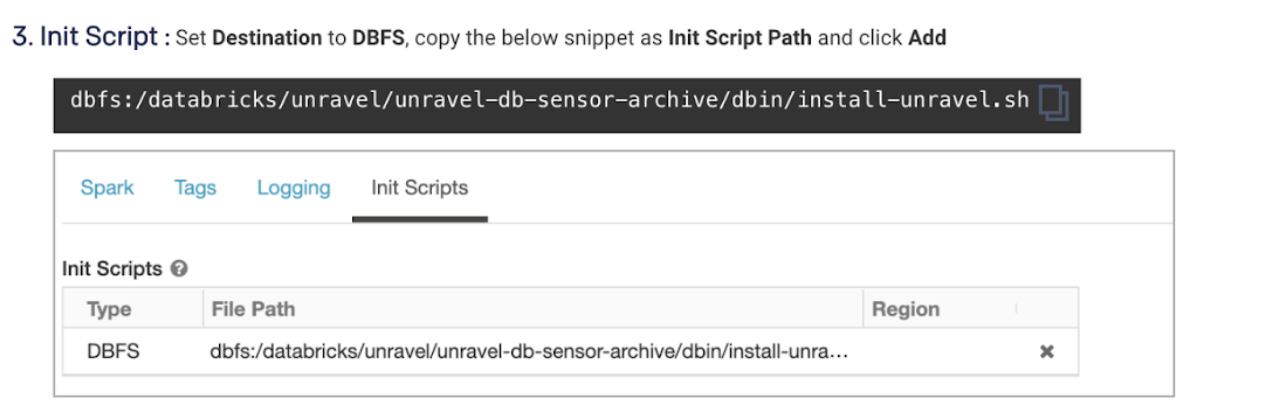

A cluster init script applies the Unravel configurations to each cluster. To set up cluster init scripts from the cluster UI, do the following:

Go to Unravel UI, click Manage > Workspaces > Cluster configuration to get the configuration details.

Follow the instructions and update each cluster (Automated/Interactive) that you want to monitor with Unravel.

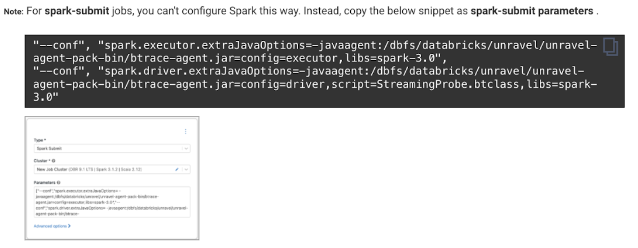

To add Unravel configurations to job clusters via API, use the JSON format as follows:

{
  "settings": {
    "new_cluster": {
      "spark_conf": {
        // Note: If extraJavaOptions is already in use, prepend the Unravel values. Also, for Databricks Runtime with spark 2.x.x, replace "spark-3.0" with "spark-2.4"
        "spark.executor.extraJavaOptions": "-javaagent:/dbfs/databricks/unravel/unravel-agent-pack-bin/btrace-agent.jar=config=executor,libs=spark-3.0",
        "spark.driver.extraJavaOptions": "-javaagent:/dbfs/databricks/unravel/unravel-agent-pack-bin/btrace-agent.jar=config=driver,script=StreamingProbe.btclass,libs=spark-3.0",
        // rest of your spark properties here ...
        ...
      },
      "init_scripts": [
        {
          "dbfs": {
            "destination": "dbfs:/databricks/unravel/unravel-db-sensor-archive/dbin/install-unravel.sh"
          }
        },
        // rest of your init scripts here ...
        ...
      ],
      "cluster_log_conf": {
        "dbfs": {
          "destination": "dbfs:/cluster-logs"
        }
      },
      // rest of your cluster properties here ...
      ...
    },
    ...
  }
}
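The note about prepending the Unravel values to existing extraJavaOptions is easy to get wrong when the JSON is edited by hand. The sketch below shows one way to merge the settings above into an existing new_cluster definition before it is submitted. The function name and its parameters are hypothetical, the paths are copied from the JSON above, and keeping an existing cluster_log_conf instead of overwriting it is an assumption made for this example.

```python
import copy

AGENT_JAR = "/dbfs/databricks/unravel/unravel-agent-pack-bin/btrace-agent.jar"
INIT_SCRIPT = "dbfs:/databricks/unravel/unravel-db-sensor-archive/dbin/install-unravel.sh"


def add_unravel_settings(new_cluster, spark_major=3, log_destination="dbfs:/cluster-logs"):
    """Return a copy of a job's new_cluster dict with the Unravel settings merged in."""
    # Spark 2.x runtimes use the spark-2.4 libs, as stated in the note above.
    libs = "spark-3.0" if spark_major >= 3 else "spark-2.4"
    agent_options = {
        "spark.executor.extraJavaOptions":
            f"-javaagent:{AGENT_JAR}=config=executor,libs={libs}",
        "spark.driver.extraJavaOptions":
            f"-javaagent:{AGENT_JAR}=config=driver,script=StreamingProbe.btclass,libs={libs}",
    }
    cluster = copy.deepcopy(new_cluster)

    spark_conf = cluster.setdefault("spark_conf", {})
    for key, unravel_value in agent_options.items():
        existing = spark_conf.get(key, "")
        if unravel_value not in existing:
            # Prepend the Unravel value to any options that are already set.
            spark_conf[key] = f"{unravel_value} {existing}".strip()

    scripts = cluster.setdefault("init_scripts", [])
    unravel_script = {"dbfs": {"destination": INIT_SCRIPT}}
    if unravel_script not in scripts:
        scripts.insert(0, unravel_script)

    # Assumption: an existing log configuration is left untouched.
    cluster.setdefault("cluster_log_conf", {"dbfs": {"destination": log_destination}})
    return cluster
```

The function works on a deep copy and skips values that are already present, so applying it twice to the same definition gives the same result as applying it once.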

Follow the instructions in this file to update instances and prices.

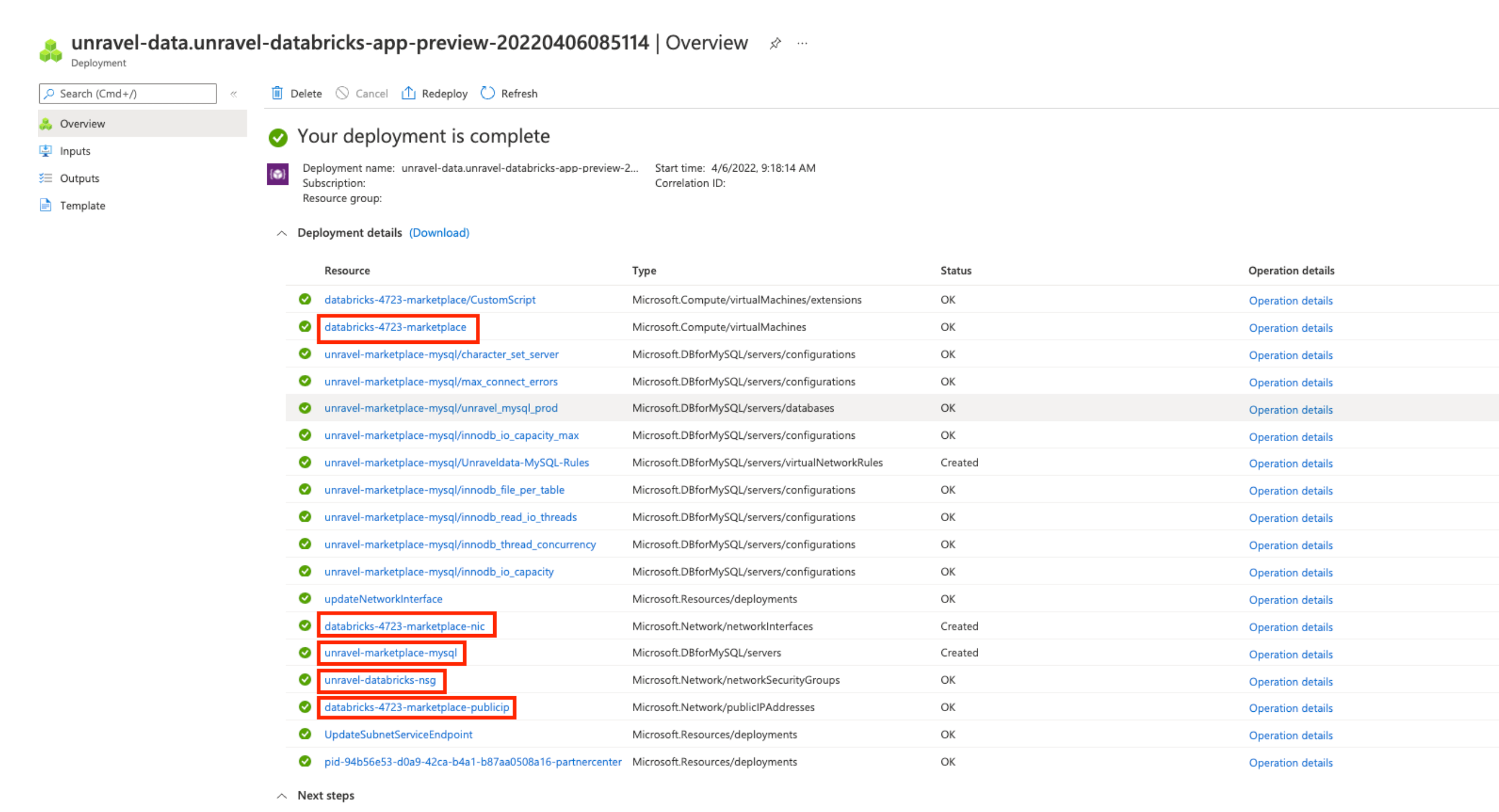

On Microsoft Azure, go to the resource group where the Unravel marketplace app is deployed and click Settings > Deployments.

Locate the deployment created by the marketplace, which appears similar to unravel-data.unravel-databricks-app. Remove the resources highlighted in the following image: